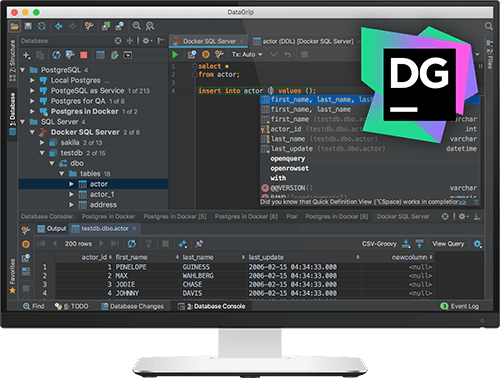

The Java Virtual Machine (JVM) running DataGrip allocates some predefined amount of memory. The default value depends on the platform. If you are experiencing slowdowns, you may want to increase the memory heap.

1. From the main menu, select Help | Change Memory Settings.
2. Set the necessary amount of memory that you want to allocate and click Save and Restart.

This action changes the value of the -Xmx option used by the JVM to run DataGrip. Restart DataGrip for the new setting to take effect.

The Change Memory Settings action is available starting from DataGrip version 2019.2. For previous versions, or if the IDE crashes, you can change the value of the -Xmx option manually as described in JVM options.

DataGrip also warns you if the amount of free heap memory after a garbage collection is less than 5% of the maximum heap size. Click Configure to increase the amount of memory allocated by the JVM. If you are not sure what would be a good value, use the one suggested by DataGrip. Then click Save and Restart and wait for DataGrip to restart with the new memory heap setting.

Enable the memory indicator

DataGrip can show you the amount of used memory in the status bar. Use it to judge how much memory to allocate. To enable it, right-click the status bar and select Memory Indicator.

Report performance issues

This functionality relies on the Performance Testing plugin, which you need to install and enable.

If you are using the Toolbox App, you can change the maximum allocated heap size for a specific IDE instance without starting it.

1. Open the Toolbox App, click the settings icon next to the relevant IDE instance, and select Settings.
2. On the instance settings tab, expand Configuration and specify the heap size in the Maximum heap size field.

If the IDE instance is currently running, the new settings will take effect only after you restart it.

If you are using a standalone instance not managed by the Toolbox App and you can't start it, you can manually change the -Xmx option that controls the amount of allocated memory: create a copy of the default JVM options file and change the value of the -Xmx option in it.

The main memory for your UDFs is limited. If your queries allow the database to parallelize a UDF across many instances, all instances on a data node together may use too much memory, and the query might crash. To avoid this scenario, you can specify limits for UDF instances per node and UDF call. This feature is valid only for the Java, Python, and R programming languages.

The following scenarios explain when limiting instances helps and when it doesn't.

Limiting instances helps when:

- Multiple scalar UDF calls are made in a query. You can reduce the number of instances to stay within the memory limit.
- A transformation implemented as a Python/Java/R UDF is applied to many columns.
- A scalar UDF requires a lot of main memory for each instance.
- Set UDFs are called for many groups and need a lot of memory, or are called for many columns.

Limiting instances does not help when:

- A single instance of the UDF already consumes too much main memory.
- A single query contains too many UDF calls, so that the summed main memory consumption of a single instance per call would already break the memory limit.

To specify a limit, you can add the option perNodeAndCallInstanceLimit to the UDF as in the following example:

    CREATE OR REPLACE PYTHON3 SCALAR SCRIPT oom_udf (input_value INTEGER)
    ...

    CREATE OR REPLACE PYTHON3 SCALAR SCRIPT nr_of_cores() RETURNS INTEGER AS
    ...

    -- confirm version is 7.1 with at least 3 GB DB RAM

    -- works well for low number of calls on a small data set:
    CREATE OR REPLACE TABLE oom_table AS SELECT 1 AS c0 FROM VALUES BETWEEN 1 AND 1e5; -- 100.000 rows
    SELECT oom_udf(1), oom_udf(2), oom_udf(3);

    -- this does 30 calls of the UDF (UDFs are treated as functions with side effects,
    -- such that UDF calls with the same input don't get optimized away)
    -- This query will end with an out-of-memory failure of the query
    ...

    CREATE OR REPLACE PYTHON3 SCALAR SCRIPT oom_udf(input_value INTEGER) RETURNS INTEGER AS
    ...

    -- need to open the schema again after re-connect
    -- This query works fine for the 30 UDF calls, which failed before
    ...

    -- confirm the out-of-memory issue doesn't happen with lua
    CREATE OR REPLACE lua SCALAR SCRIPT oom_udf(input_value number)
    ...

    -- it's also way faster than python for this simple task
    -- This query works fine for the 30 UDF calls, which failed before,
    -- and is much faster than the Python UDF
    ...

However, there are also cases where the instance limit can't help anymore, for example if your query contains too many UDF calls. We used 30 calls and that worked fine, but if we increase the number of UDF calls to 50 or higher, the query will reach the memory limit even with the instance limit.
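To make the relationship between the memory limit, the number of UDF calls, and the number of instances per call concrete, here is a minimal Python sketch. It assumes a deliberately simple model in which every instance of every call is alive at the same time on one node. The function names and all numbers (a 3072 MB limit, 100 MB per instance, 8 unlimited instances) are hypothetical and only illustrate the arithmetic; they are not measurements or database defaults.

```python
def estimated_peak_mb(udf_calls, instances_per_call, mb_per_instance):
    """Rough peak memory on one node, assuming all instances run concurrently."""
    return udf_calls * instances_per_call * mb_per_instance


def fits(udf_calls, instances_per_call, mb_per_instance, limit_mb):
    """True if the estimated peak stays within the (hypothetical) memory limit."""
    return estimated_peak_mb(udf_calls, instances_per_call, mb_per_instance) <= limit_mb


def max_instances_per_call(udf_calls, mb_per_instance, limit_mb):
    """Largest per-call instance limit that still fits.

    A result of 0 means that even one instance per call exceeds the limit,
    so an instance limit alone cannot help.
    """
    return limit_mb // (udf_calls * mb_per_instance)


if __name__ == "__main__":
    LIMIT_MB = 3072        # hypothetical memory available to UDFs on one node
    MB_PER_INSTANCE = 100  # hypothetical memory footprint of one UDF instance

    # 30 calls, 8 parallel instances each: far above the limit.
    print(fits(30, 8, MB_PER_INSTANCE, LIMIT_MB))
    # 30 calls, limited to 1 instance each: fits.
    print(fits(30, 1, MB_PER_INSTANCE, LIMIT_MB))
    # 50 calls: too much even with 1 instance per call.
    print(fits(50, 1, MB_PER_INSTANCE, LIMIT_MB))
    print(max_instances_per_call(50, MB_PER_INSTANCE, LIMIT_MB))
```

Under this model, an instance limit rescues a query only while `max_instances_per_call` returns at least 1. Once the number of calls alone pushes the total past the limit, the remaining options are fewer UDF calls per query or a smaller footprint per instance.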
